In this episode, Wael Abdelmalek, CEO and Co-Founder of Uthereal, gets brutally honest about what it takes to build a technical moat in the AI era – when anyone can ship a competing product over a weekend. Together with Paul Müller, Wael discusses why vibe coding without understanding the architecture can kill a product at scale, selling before the UX existed, spending $12,000 on sales emails with zero replies, and an enterprise demo that collapsed when a manager handed it to the wrong users without context. Plus the mindset shift that keeps Wael building through guaranteed failures and painful execution – this is not a journey for someone optimizing for happiness.

Daniel Dippold: Welcome to Been There, Done That. A podcast we launched to talk about raw, unfiltered founder stories. We listen to so many podcasts ourselves, and we figured that most of them talk about success stories – after they happened. And facts and figures get distorted, plus strategies that worked 10 years ago don't work today anymore. We at EWOR wanted to launch something that tells raw, unfiltered founder stories today.

And we believe we're in a unique position to do this, because every year we support 35 founders to build $10B+ companies. We do that as founders ourselves. 40% of our full-time team have launched companies valued between 100 million and 10 billion. Therefore, we want to talk founder to founder with people on how they got from zero to a million ARR or from zero to a seed round.

I'm Daniel Dippold, and I'm the host. I'm not a professional host, but I'm a former techie, a mathematician, someone who's built tech ventures for the last 10 years. And I want to talk techie to techie, founder to founder, with people who have what it takes to create a 10 billion dollar company. And I want to uncover the specific things they did in order to achieve that. And I hope that I can help you with those examples to build your own companies.

You’re listening to Been There, Done That, welcome to the show.

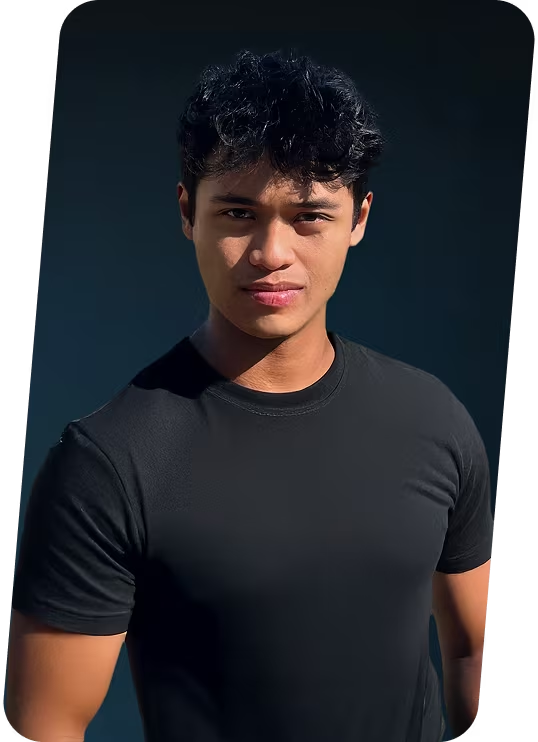

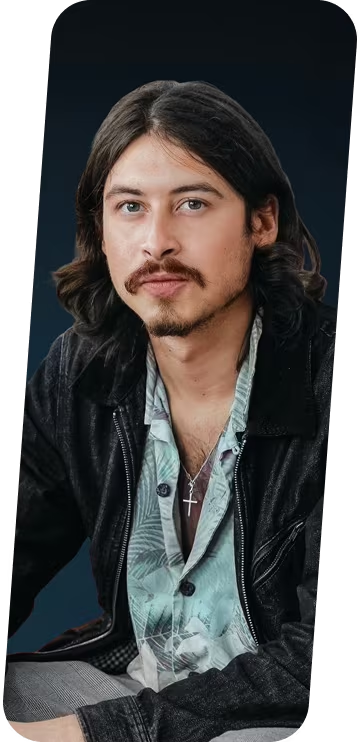

Paul Müller: Hi, welcome to another episode of Been There, Done That. I'm Paul, Partner here at EWOR because I founded a little software company called Adjust and sold it for about a billion dollars. And today I'm talking here to Wael to hear from him how he's gonna try to do the same or even better.

Wael Abdelmalek: Yeah, super happy to be here.

Paul: Tell me a little bit about what it is that you're doing? Like, what is Uthereal doing? Like, so that people listening to this or watching this can get an idea of what we're even talking about.

Wael: Yeah, sure, so the obsession started when I joined AWS and started building solutions there. And basically, the thing I found most fascinating was the concept of infrastructure as code, the idea that you can write code and that becomes infrastructure, which then you can put applications on top of, and how amazing that is in building applications. And I thought about even one better, I thought about what if business also can become code. And I think we're heading in that direction. I strongly believe that business processes are going to become code in the very near future. A business can just write some code and create a new function or create a new capability, a business capability, not a technical capability. And that's what we're trying to do with Uthereal. We're trying to codify businesses, really understand some of the key business processes, build the technology and infrastructure that allows for that next wave to happen, and kind of be one of the first movers in that space. And that's what we're doing. That's what Uthereal is all about. We invested heavily in trying to understand how business operates, how to recreate the business value of code. And we're using AI to be able to deliver that. And that's what we do. We go to customers, and then we try to understand how they run the business, how they generate revenue. And then we say, if we build that business process with AI, you can still execute, and you can still scale it, and you can generate revenue out of it. So really, we're helping businesses move into the AI wave, really scale their services, and generate more revenue out of it.

Paul: All right. For the people listening, that sounds maybe a little theoretical. Can you give like one or two examples of how you find your first few customers? Because I think that's where a lot of people are struggling, right? When you're starting out, how do you get your first customer, second one, and so on. And, and what was the kind of value proposition that you went with to get these people, like, how did you convince these guys to join you? And what is it that you are actually doing for them?

Wael: Yeah, this is, I mean, the story of every founder: you have to dig through your network and you have to start attending events. So I guess when we first came up with the idea, we were like, OK, what does the product look like? So we started to focus on organizations that rely heavily on knowledge to deliver their services. Consultancy, for example, or legal. So one of our first customers was a supply chain consultancy. And it was a consultancy that has a really strong voice, a top voice in supply chain. They have 350,000 followers on LinkedIn. And then we went to the CEO and we're like, how would you like to scale your voice? They advise a lot of people, and they advise the top supply chain companies like DHL and FedEx and all of these guys. So we said, OK, why don't you give us all the supply chain material that you have, the know-how, and the type of consultancy service you provide, and let's see if we can replicate that with AI. And that's exactly what we did. They were skeptical in the beginning. They were like, well, I don't know, is it really going to work? Is it going to be accurate? What if it hallucinates? What if it says stuff that's not me? And obviously we built the tech in such a way that that doesn't happen. And yeah, we built this AI agent where some of their customers can actually say, hey, I have a supply chain incident or a problem. How do I go about it? And then it goes, in a very structured way, into actually finding out that your framework is wrong, your policies are wrong. You know, maybe there's something that you're not optimizing for. And we built this one agent where the customer literally goes, uploads their supply chain incidents, and it goes through them and systematically says your framework is broken. This is a service that was costing $15,000 if you hired that consultant, and the agent can deliver it. And they were very excited about it. 
They released it in one of the trainings and they actually got quite a lot of positive feedback. And that became one of the use cases that we started with.

Paul: I think a lot of people are struggling, because when I talk to my fellows, you know, the ones we’re trying to help at EWOR to get on the road, specifically in the Ideation Fellowship, so many of them always ask me when the right time is to start selling something. Right? And I always tell them the same thing. You know, yesterday would be great. Today's the second best opportunity. Right? But how was it for you? Like, did you sit down, build a product, and then go and try to give it to people, or did you just go to people with an idea of what you wanted to do, sell that to them, and then start building it? Like, what was your experience?

Wael: That's actually a very good question. We didn't start in a conventional way. We actually started when we entered a hackathon. So the hackathon was Hack Zürich. It was one of the biggest hackathons in Europe. And we actually won multiple awards during that hackathon. And the hackathon idea was, people are gonna be in remote work, how can they get the best expert advice when they're not around? And we started building these expert twins. We'd pick one of the most expert employees in one topic, and then we'd create an AI copy of them and make them available through Slack. So, for example, you're talking to them, they could be traveling, they could be on holiday, but their Slack is responding with what they know. So we won awards that year from three companies: Microsoft, Randstad, and Swiss Re. And then Swiss Re invited us to pitch it to them in their headquarters. And this is when we realized we have something. Like we actually have something. And at this point you have already built a product and you're pitching it. So you're kind of in building mode and sales mode at the same time. We scaled from there. Like, we were like, okay, this is something that somebody actually wants to know more about. So we started iterating on a product and coming up with new ideas, and eventually started reaching out to our network and presenting at some events. And I think the events and exploring the network are the real validation here. And I mean, I advise anybody who has an idea to immediately engage with customers. Don't stay too long in your box building. There's lots of great ideas out there, but until somebody is willing to listen to you, you don't have anything yet.

Paul: And I mean, I can only, you know, remember from my beginning, and I'm kind of curious to hear what it was like for you. But the first time you stand with the customer, you know, you kind of have maybe a product-ish that, I mean, basically you're just building as they are asking for it. Like there's this feeling of imposter syndrome, right? Like you feel a little bit like a con man selling something that doesn't work, which I think a lot of first time founders are struggling with. How was that from your experience?

Wael: I struggle with that myself. I'm the kind of guy who would definitely present something when he knows he's confident about it. So for me, there's always a fine line, right? And everyone has their line, you know, differently. My line is, I know the core thing works, but maybe I don't have all the fancy stuff, the integrations and the UX and all of that, that's fine. For me, that's okay, that's my line. But the core thing works, which is that the AI works. So I was happy to kind of not stretch the product, but like, oh, can I hook it up to this? Of course you can. You know, for me, this was fine. And I feel like some people have it differently, but my confidence level, as long as I'm aware I can build a product, everything is within reach. And now even more so, with AI, you can build anything. So I think if you have the core idea and the core business outcome, right, you should be more comfortable now pitching that because actually the next feature is not weeks away. It's actually with AI now, it's like hours away. So it's okay.

Paul: Yeah, I think that's for me the biggest difference, right? That divides the two of us. When I went into a meeting and talked shit, I actually had to build it myself, right? When I said, sure, we can do this, I was the guy that had to open the laptop and be like, oh crap. All right. And I had to go back to the engineers and go like, guys, just maybe, maybe I sold something that isn't quite production ready yet, or doesn't exist at all, right? And it was a nice problem, right? And okay, many nights of sitting down, crunching, writing the code by hand, you know, like we did in the olden days, right? So I’m kind of curious about what you were saying. Like now it's hours away, but for me it's still an interesting idea to think about conceptually, right? That your execution speed is different, right? So how does that feel? Right? For me, it was like when I was sitting in these meetings and got asked, hey, can you do this, this, this, this, this, this? And of course you say yes. Right? But I knew every time, okay, that's like three weeks of nonstop crunching to make that happen, and just hoping that the integration process is slow enough, right? That them signing the contract takes a couple of days and sorting everything out takes a couple more days. That gives us that window to actually get there. But I think from your perspective, right, as you said, it's like hours. So how do you deal with this? Like how does that feel on your side? I think that's the big difference between our generations of companies, right?

Wael: And this is why startups, pre-AI and post-AI are slightly different in a way. And I think, OK, so to answer your question, you feel like God in a way, because you can pretty much build anything. But then, actually, the size of the problem that you're solving is much bigger. It's where you need to spend the most time. It's actually much, much bigger. So maybe back in the day, only two, three years ago, pre-AI building everything, you would have a difficult conversation with a customer around some sort of integration, like if you want to integrate with five different payment providers. Today, this is three prompts, which is like four or five hours to get it working end to end. Before, that was like five months. And you had to do back and forth. So these conversations are easier now.

But the product scalability conversations, when it comes to AI and these new architectures, are what's actually very, very challenging. So I had an interesting conversation with a customer where they wanted to use our product. And they were like, oh, can I integrate it with this and this and this? And we're like, yeah, of course you can. This is two or three lines of code. But what if I threw in like 50 terabytes? How would it do? And then you're like, wait a minute. You see, this is now the level that you operate at. It's very, very different. And you'd be like, oh, wow, I have to change some things here. So I think the size of the challenge is definitely different. The low hanging fruit, the small things, are now much easier to do. But with AI, these new architectures and the scalability laws are something you have to be very aware of. And it's not something that you can just say yes to or kind of blag.

Paul: I mean, for me, one of the key differences that I also see with, let's say, this generation of startups is that architecture, what your infrastructure looks like, will become more important, because, at least in my humble opinion, a lot of the agents or LLMs that you can ask right now are not really well-equipped to think through the problem. I don't feel that they're giving you the full options, especially as people don't know the correct question.

Because the question isn't like, hey, how do I pipe 50 terabytes through my Node.js backend, right? The question is, why the fuck would you want to use a Node.js backend for this one, right? But as LLMs are positively affirming, they will never tell you, hey, that was a terrible idea, mate. Like, you need to take a can of gasoline, torch it, and start over. Which would be easy, right? Like that's something that at least, you know, you can say, hey, I'm going to rewrite this, I want to write it in Rust or Go and make it faster. But I feel for me, that's the other side of what we're seeing, specifically as what I was building was ultra high performance, right? Like for us, margin on the COGS was do or die. The only thing that was keeping us alive was keeping the cost as low as humanly possible. So something like AWS was simply not in the cards for us. It was 10X too expensive for what we wanted to do. So we had to do everything ourselves at a low level. And now, you know, that I'm on the other side of this journey and I look at all the young ones starting out, I'm like, oh, well, you guys completely forgot how any of this works, right? And what happens if you pour a little bit of load onto these systems? So I think that's for me a little bit of the flip side of this, right? It's super easy to just make more code, but it is, I think, still as hard as it was 10 years ago, 15 years ago, when we started, to ask the question, okay, how do I make this scale to, like, truly magnificent scale? We were tracking tens of petabytes a month, right? Whereas when I just chat with an LLM about how to design things, it actually has no real idea what to do, right? So maybe another thing that for me is also the other side is we could build a technical moat, not just by infrastructure, but also just having, I don't know, a thousand partners integrated was a moat, right? 
Like no one else could just go and integrate a thousand partners because it would take forever to do so, right? Today, as you said, it's like a couple of prompts, a couple of links and just, you know, you do it. How do you think about like technical moats in the age of everyone can write anything at any time now?

Wael: This is a really good question, and this is something where I feel we've invested quite a lot of time. I think it's going to come back to the core principles in architecture, because with this abundance of code you can easily build a monster of a startup that there is no way you can scale. It's just way too much code and way too much bloat and it doesn't perform. And I think today if you just rely on vibing your way through, you're going to hit a wall very, very soon, the minute you have one serious customer, right? Just to give you a small example: we found out that we have to have two layers of backends for us to actually scale an AI application. We have to separate the application backend and the AI backend itself, so we have two backends, which is something I was never really familiar with until we put it together and were like, wow, the challenge is different. Like scalability and the different types of architectures you can have. Like you said, agents are not reliable and you can't trust them to execute all the time, so where are you going to put your security layer? Where are you going to put your guardrail layer? What does your scalability look like? Are you going to let these agents fire up these machines, or are you going to have something that kind of sits on top of all of this? So I think the challenge, to your point, now that we can write unlimited code, is actually how are you going to architect all this code? How are you going to get this code to work together? How are you going to get your applications to work with each other? And it's also very interesting because now we can say, oh, I don't need a front-end engineer. Oh, but wait until you build, I don't know, 500 pages worth of code. Who's going to manage all of this? Your vibe guy? If your vibe guy goes, you're dead. 
You're dead in the water. Before, you were writing all this code, but there was somebody who knew why, and because somebody wrote it, there was something to explain why it was done this way. Today AI is writing it and nobody's explaining why AI wrote it this way. So how are you going to scale that? So it's become 100 times easier to start, but to scale, I think it became more difficult.

Paul: Yeah, I mean, that's something that I see with the fellows I'm advising, right? Like my point of view is usually like the, you know, old man yelling at the cloud, telling them, hey, base principles, guys. We have to go back to first principles, we have to you know, we have to do very boring black box testing, we have to do logic documentation so that whoever else is vibing this stuff together knows why all of this stuff is here, right?

We have to force some comments in there, not just for the humans, but also for the machines, to explain why something was implemented this way. And for me, it's language choices, framework choices, and also limiting the degrees of freedom. You know, when I said Node.js is probably a terrible choice: the LLMs write horrible JavaScript, right? Because they were trained on horrible JavaScript. And somehow they write even worse Python. No offense to the language, love the language, but LLMs are trained on terrible researchers writing Python code, right? Like the worst people to write code wrote most of the code that trained the LLMs. And so now they write terrible Python code. And for me, it's like, let them write boring, fixed, static languages like Go or Rust and, you know, turn on all the validations you can, lint the hell out of it, and then give very narrow specifications for the design choices that they're allowed to make. And then maybe you have something that you can still vibe on six months from now, because otherwise, yeah, as you said, it's probably just growing into this big ball of yarn that you just tangle yourself in, and there's no way you're going to keep maintaining that.

Wael: No, absolutely. And I think you kind of hit the nail on the head, because building on top of crap is eventually going to give you more crap. Like we were trying to build this POC for a customer. And then we found out that just a very simple feature that we built on the front end took ages, and it was vibe coded. And we looked at the code and it was doing authentication six different times because we created six different boxes displayed on the page. And it didn't realize that it's fine to do authentication once or authorization once. No, it was calling the same function six different times because we're showing six different things, and that's something a human wouldn't do. But you're building some of this crap into your own application and you have no clue why it's behaving the way it is. So what we try to do is really ground that, make sure that we're not mushrooming into, you know, building some weird application at the end. So we actually give very specific instructions, even for people using Cursor and things like this, on how to build new features. And as we're writing good code, we're documenting it in the same place, and we're telling AI to refer to that documentation before it creates new code, which pushes our standards. And that's very difficult to do. We did a nice refactor at the beginning of this year. It's painful, but it's super strategic. And if we hadn't done it, we wouldn't survive to the end of the year, I'm sure.
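The repeated-authentication problem Wael describes can be illustrated with a minimal sketch. Everything here is hypothetical and not taken from Uthereal's code: the `authenticate` stand-in, the six box names, and the call counter exist only to show the difference between checking the session once per box and checking it once per page.

```python
from dataclasses import dataclass

AUTH_CALLS = 0  # counts how often the hypothetical auth check runs


@dataclass(frozen=True)
class User:
    name: str


def authenticate(token: str) -> User:
    """Stand-in for an expensive auth round trip (hypothetical)."""
    global AUTH_CALLS
    AUTH_CALLS += 1
    if token != "valid-token":
        raise PermissionError("invalid token")
    return User(name="demo")


# Six hypothetical boxes shown on one page.
BOXES = ["profile", "usage", "billing", "alerts", "agents", "history"]


def render_box(box: str, user: User) -> str:
    return f"{box} for {user.name}"


def render_page_naive(token: str) -> list:
    # Pattern from the anecdote: every box authenticates on its own.
    return [render_box(box, authenticate(token)) for box in BOXES]


def render_page_once(token: str) -> list:
    # Authenticate once per page load and share the result with every box.
    user = authenticate(token)
    return [render_box(box, user) for box in BOXES]


def count_auth_calls(render, token: str = "valid-token") -> int:
    """Reset the counter, render one page, and report how many auth calls ran."""
    global AUTH_CALLS
    AUTH_CALLS = 0
    render(token)
    return AUTH_CALLS


if __name__ == "__main__":
    print("naive:", count_auth_calls(render_page_naive))
    print("once: ", count_auth_calls(render_page_once))
```

Both versions render the same page; only the number of auth round trips differs, which is the kind of thing a written coding guideline ("authenticate at the page boundary, pass the user down") can pin down for an AI assistant.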

Paul: I mean, I've also seen people have some success with reviewing agents, basically in the CI pipeline: when you push, another agent, given some coding guidelines and best practices, reviews the code, like, is that fitting the schema? Because then it isn't this typical grading its own homework, which is always like, oh, it was probably correct. Maybe? Like, is it? And then for me, one of the other things has definitely been end-to-end testing, which I think is something that, you know, fell a little bit out of style maybe, because when I started, it was a big thing. And then in the middle, it was like, oh, we do everything with unit tests. Whereas I say, don't let LLMs write their own unit tests, right? They will always pass and it doesn't mean anything, right? But just have a black box test: assuming that you don't know what happens inside the backend, you just throw data in, look at the data out or the mutation, and see if that's what you wanted. How it does it is secondary. But checking that it does it, I think that's something that I've seen definitely be helpful. But overall, I think from an investor's perspective, now there's a lot more skepticism about what the technical moat is, right? Even if we know, hey, there's still a moat, right? Like getting it to work at scale for more than one customer is not easy. But I think there's a general skepticism in the investor community now that says, hey, how do I know no one's just going to copy this thing in like three weeks because, you know, they just found the right prompt to build the same thing? So, I mean, that's what we at EWOR kind of focused on, to say, okay, then what is left as a moat for us is this commercial traction, right? To get in and say, if you manage to get money from someone for doing this, that means it's probably worth something, right? 
And the more people you get to give you money for doing this, the more validation you have, right? I think that's something that a lot of people that are starting out might not have, let's say super high up on their screen. They just want to build something really cool. How does it look from your side of the table saying, okay, you know, everyone has these expectations, no one believes what I'm building is unique and special. Everyone can copy it. So, how do you deal with that?
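The black box testing Paul mentions in this turn can be sketched roughly as follows. This is an illustration under stated assumptions, not anyone's actual test suite: `KeyValueBackend` and its request format are hypothetical stand-ins for a real backend, and the tests deliberately only send requests and compare responses, never inspecting internal state.

```python
import unittest


class KeyValueBackend:
    """Hypothetical system under test; the tests never look at its internals."""

    def __init__(self):
        self._rows = {}

    def handle(self, request: dict) -> dict:
        action = request.get("action")
        key = request.get("key")
        if action == "put":
            self._rows[key] = request["value"]
            return {"status": "ok"}
        if action == "get":
            if key in self._rows:
                return {"status": "ok", "value": self._rows[key]}
            return {"status": "not_found"}
        return {"status": "bad_request"}


class BlackBoxTest(unittest.TestCase):
    """Throw data in, look at the data out; never touch backend._rows."""

    def setUp(self):
        self.backend = KeyValueBackend()

    def test_put_then_get_returns_stored_value(self):
        put = self.backend.handle({"action": "put", "key": "a", "value": "1"})
        self.assertEqual(put, {"status": "ok"})
        got = self.backend.handle({"action": "get", "key": "a"})
        self.assertEqual(got, {"status": "ok", "value": "1"})

    def test_get_of_unknown_key_is_not_found(self):
        got = self.backend.handle({"action": "get", "key": "missing"})
        self.assertEqual(got, {"status": "not_found"})

    def test_unknown_action_is_rejected(self):
        got = self.backend.handle({"action": "delete", "key": "a"})
        self.assertEqual(got, {"status": "bad_request"})


def run_black_box_suite() -> unittest.TestResult:
    """Run the suite programmatically and return the collected result."""
    suite = unittest.defaultTestLoader.loadTestsFromTestCase(BlackBoxTest)
    result = unittest.TestResult()
    suite.run(result)
    return result


if __name__ == "__main__":
    unittest.main()
```

Because the tests depend only on inputs and outputs, the implementation behind `handle` can be rewritten, by a person or by an AI assistant, without touching the tests.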

Wael: Yeah, this is definitely something that a lot of the startups in this whole AI startup era are watching very closely. When you look back to 2022 or 2023, when ChatGPT first came out, there were all these startups, oh, talk to my PDF, talk to my Word document, and then with the next update, they all died.

So, and then the next round of that is like, oh, I can do this to create some sort of an image or whatever, again, new model, another layer dead. So obviously investors watching all of this, they have to be extremely skeptical now. They're like, what do you have that nobody else does? Like, how do I know that this type of service isn't going to be just a new update of a large language model? And we try to make ourselves also kind of very aware of this and try to create differentiators there. And then the way we did this is that by design, we're not some sort of a large language model wrapper. We would not, we don't, we use the large language model for maybe 10% of what we need. We invested our time building specific algorithms that break down knowledge very well and make it findable and force the agent to execute to a high level of quality when it comes to following their instructions. And almost like the iceberg, if you look at the iceberg, this is the stuff below the surface, and this is where we spend a lot of our time developing. Anything on top of that, but then we have to prove that this actually works very well. So when we went to customers with lots of content like publishers who have extremely technical medical content for 75 years worth, we said, look, this really, really works. Like, this will, if you put your publications here, you wanted to create some sort of a copilot, a medical copilot for a specific topic based on your content of thousands and thousands of books, this will work. You're not going to be able to do this in ChatGPT. You also have to kind of like prove that the tech also works. So when we go to these customers, when they tested it and actually qualified the quality of the product, that almost proved that we have something, you know, and then you repeat that across multiple customers. And then you'll hear the same thing. Like we have authors working with AI to test their own books, to test their agents that were created for them. 
And they are like, impressive. Like, this is amazing. So I think the moat will have to come from you really being sharply focused on the value that you're providing, building technology that cannot easily be replicated, because it's a bit of science as well to do that, and then getting these use cases as fast as you can and replicating them as fast as you can.

Paul: Talking about proving to customers that stuff works. How's your go to market in terms of like getting these customers to believe that your things work? I guess, typical proof of concepts and so on? Because I think there's a lot of people out there going like, okay, but how do I, I have someone that says, okay, this sounds cool, but what now? Right? Like, you know, I asked her out now, I got the first date. What do I do? Right? So maybe how's, how's that been from, from your perspective?

Wael: So, yeah, this is the challenge that I put for ourselves this year. It's like a very sharp focus on go to market and sales. So the name of the game for us this year is sales. So past the first few customers that we've landed, how can we get more of them? And that now made me create a sales machine. And that sales machine has many legs and I’m now discovering sales is actually not just art, it's also science at the same time.

So our go to market is we're using multiple channels to try to reach more people. And the first leg of this is automation. So I have a story. How can I create a sales machine that takes that story, goes on the Internet or goes in the market, and finds who in the same place wants that story, and then I literally communicate it to them and try to put it in front of them? Whether it's LinkedIn, email, and all of that, that is automation. So I actually managed to create that, and since kicking it off two weeks ago, we already had four or five calls. We already have two pitches delivered, and a proposal is going to be sent this week. So I was pleased. This comes after, you know, being hyper focused on who you're going to reach out to and what you want to tell them. That's the first leg. The second leg is partners, which I'm actually realizing is also important: finding people in the industry who you can partner with, and they can almost be your cheerleaders, because you would explore their contacts and then they will put you in front of people. And I also kicked this off this year. So I'm working with somebody who is actually retired now, but he was a CEO of multiple publications, and now he's putting it in front of all his good old friends, and that's very powerful. And then the third thing is founder-led thought leadership. So we went on a course, me and my co-founder, on how to post on LinkedIn. It all sounds silly, but actually there's a course for that, and we did a challenge where we had to post every day and look at our analytics and our stats to understand the LinkedIn algorithm. It's not just about posting. It's about looking at the analytics: if only your friends look at your posts, you're not doing sales. You want people outside your network to look at your posts. Well, how do you get the algorithm to do that for you? We had to do a course on that. 
So this is founder thought leadership, events, presentations, all of that stuff. And then there's actually trying to hire really good salespeople, you know, which is actually not that easy. And we're now doing this. So this is my sales machine, and every month I'm going to look at it. I'm going to say which leg is doing better, and then I'm going to double down on it. I kicked that off this month and I'm quite happy with what I'm seeing so far.

Paul: I mean, it's nice to see that a couple of things didn't change. I mean, yeah, you've definitely got automation for outreach. I think one of the things that I'm also seeing is that the outreach part is something that a lot of companies at the beginning don't focus on because it's uncomfortable, right? It feels a little awkward to go talk to people that you don't know, to maybe pester some people that you're not feeling quite ready to approach yet. I think that's one of the base problems that a lot of people have, this “build it and they will come” mindset. You know, I just built something really cool. I put it out there and then people will just show up. And I think the faster you can realize that that's not how any of this works, the better. Unless you go and shove it in their face and explain to them why it is better, why they need this, what it can do, it's going to be very difficult. I think it's hard for a lot of people to get to that stage, to realize that. Maybe even, you know, you raise your seed round and you spend all that money on building something really cool and doing like one event here and there and just wait for that magical inbound sales to happen, but, um, I think you can agree, right? That's not how that works. You have to go out and you have to shout from the rooftops about it. But of course finding the right salespeople has definitely also been one of our main challenges, right? Despite me being the CTO at Adjust, one of my key roles was to lead the learning and development department that was qualifying them, which was fun. But we found out that there's a direct correlation between them actually understanding technology and them being able to close and sell. 
And so I had the absolute joy of making sure everyone in the company had a basic understanding of the product, which sounds trivial, but we basically hired teachers, like real-life, honest-to-God teachers, and did three months of drill sessions and role plays and the whole nine yards, just to get salespeople to be able to sell such a technical product. Definitely fun, and very interesting for me to understand the psychological differences between a seller and a techie, right? But you have to get enough technical knowledge into that seller. They just want to make money, right? They just want to sell stuff. They don't care what they sell. But you have to get them to stop and go like, hey, listen, when someone says server, that's what they mean, right? And a click is this, and the concept behind it functions like this. So, how do you deal with that today?

Wael: I have to say I'm quite impressed with you being a CTO and also leading sales people. Because to me, this is like a crazy mix-up.

Paul: But I was just teaching them. Don't think they were appreciating that too much but it was mandatory so they were on board with it.

Wael: I mean, like you said, sales, I find it very tricky too, so I interviewed a few sales people and the first thing I found out is that they're really good at selling themselves.

Paul: No surprise there, I guess.

Wael: So I was like, this is interesting, because when you meet a few, you're like, wow, they're all really, really good at convincing me that they're the right person. There's something wrong here. And then you talk to other people and they're like, no, no, no, they're good at selling themselves, and you're like, all right, so there's another layer to this. So I have to actually do a little bit more homework. I now have like two or three in the pipeline where I'm actually trying to find out what they've worked on in the past, and I'm trying to get, you know, some recommendation, like, was he really as good as he said? But then I started to dig a little bit deeper, and I found that some will just say they were great, and some will say they were great and put numbers on their CV, along with when they joined the organization and when it started and when it finished. And I've now started looking at these numbers differently, because I can then validate them. That, to me, is something I learned from looking at the CVs and having done the interviews and found that they're all really good at selling themselves. So, like I said, I have a couple in the pipeline, and I'm going through the process of validating them. But I completely understand you: you're going to have to give them the right language. I was in very technical organizations, some of the best cloud organizations, and you have a sales guy come on a call and say a couple of things, and everybody cringes, because the customer, unfortunately, was also technical. And when they hear these words, they're like, you know, that's not going to do, you can't have that person there. So I've learned the hard way, having these people on the call, that you can't set them loose either.
So whoever I'm going to bring aboard, there will be very, very close, you know, mentorship, making sure they say the right things. But also our sales technique is slightly different. I now go to sales meetings with 20% of the product for that customer kind of built. So I don't spend time convincing. I spend more time showing. For the last customer, who we were building an AI agent for, I actually built what it would look like. I took some of the content from the website, put it in there and actually showed them a demo. It's like, hey, you can put one thing in there, but by the way, you can put like a million things in there, and that's what it's going to look like. Sales calls have become so much easier, because if I still go and show slides, it's very long-winded. Now they've seen the product, and the first comment was like, oh, I like that.

And when you hear that, that's like 50% of the tough job is done. What's left is you keeping your composure and actually understanding how you're going to close them. And that's what you should be focusing on. So I think the approach I found in sales is like, you just have to be more and more proactive. I just learned it's like, it's a bit of an art. I have to keep trying.

Paul: Yeah, I think the rule is, you know, show, don't tell. I think that's something that, I mean, we talked about it, right? It's also something that I talked to a lot of the EWOR Fellows about: people showing up with a slide deck and, 50 slides deep, hammering on about why their product is better. I think we're definitely past this point right now. On one end, the attention span of people is reducing. Now everyone wants it in like three minutes, or you have to put a little Minecraft video underneath what you're saying so they pay attention, right? But I think you definitely have to get to the point. If you want to sell something to someone, you have to get to the point of why on earth they should keep listening to you much earlier. And now, I mean, at least we have the technology, right? When we built our wonderful little minesweeper demos, right, where we knew exactly which buttons worked and which ones might not have worked at all, we had to do this by hand, which was rather, you know, involved. Now, of course, you can just buy the demo and just be like, that's what this is gonna look like. Yeah, as long as it looks like this in the end, it's all fine, right? It's just that for us it was a lot more work, of course. Yeah, I had to put this together on the plane going to the customers, like, that KPI totally exists, I just don't click on it.

Wael: It's kind of not a fair comparison, I know. And to your point, I feel like if we don't use that as an advantage, even in the sales process, it's kind of a missed opportunity, right? Today, I can easily remix something that I've already built and say, hey, make it look like that. And it just makes it look like that. And that's, hey, that's 20% of your product. And by the way, here is a password, you can play around with it and then come back to us when you have a view. And that helps a lot. It helps with the trust as well, because the competition is now also very high. I'm not operating alone; there are a million other startups trying to do the same thing. So how do I differentiate myself when I meet with a customer? This is one of the things I try to do.

Paul: I think for me, this comes back to the goal, of course. You know, you want to close that sale, right? Which means you have to track these metrics. I feel that this is a bit of an, I don't want to say forgotten art, but definitely something a little bit overlooked, the old-school habit of just getting these metrics in. I think it comes from builders not liking to be quantified with metrics. Because when we were coding ourselves, it was like, lines of code are a shit measure to see if you're productive or not, right? Maybe even closed tickets are not a good measure of how productive you've been, if you've done like a huge refactoring job and scaled out a system or something like this. And so I think it comes from our nature as builders not to want to quantify people by numbers, when in sales it's probably the better idea to say, like, hey, this seller is closing, I don't know, 50%, and is fulfilling his quota at 110%. Or this seller is only hitting his quota at like 60%, right? And maybe I need to replace this guy. From my perspective as a builder, that was very alien to me when we started talking about sales as a whole, having hundreds of sellers and how to quantify this. Like, how do you make them successful, right? For me, it has always been like, okay, just assigning a number to a person seems a weird way to measure performance, but ultimately I think sales boils down to what they can sell, right? I mean, that's the bottom line, right? And I think a lot of people who come from a builder's perspective might not have that kind of mindset to go in and say, okay, I'm just gonna give these guys a number. I like the number, you stay. I don't like the number, I'll get someone else to try to get a higher number, right? So what do metrics look like for you in sales? What are the basics you're tracking? What does that look like, the, let's say, boring backend part of it?

Wael: So for us, what we do is, when I have an outreach, I record that outreach. We now have a CRM system that we built ourselves, because I was using all the usual suspects and I felt really restricted by them. So I just kind of spent a weekend on it. I was like, well, I'm not doing anything anyway, so let me build a CRM. And now I'm tracking outreach. I'm tracking the time between a positive outreach, meaning that they responded to us or replied saying, hey, this is interesting, and an actual conversation. I think this is the first metric, because I feel like, hey, this is intent, and if we don't act quickly, we lose that opportunity. But then once we have that, we try not to have way too many calls before we get a yes or a no. And this is the approach I mentioned earlier: we go there with a kind of ready thing. It's like, hey, this is what you want, and it could look like this. And then afterwards they say yes, and now the painful part starts. This is when, unfortunately, it's not in your hands anymore. It's in somebody else's hands. For example, I have two offers out to customers, but they just went skiing for two weeks. And I'm now sitting there like a sitting duck, like, what can I do with this? And this is one of the things, the dormant stuff, the slow-moving stuff. How can I close? This is one of the metrics: from offer to closing. I'm now monitoring this, because I used to say, oh, we will talk to them, they would want it. But now, I wanna make a million this year. How am I gonna get there? So it's the time between sending something out and getting a response. If it's a no, it's fine. Sometimes it's, we want to do it, but we will do it in six months. Fine, I'll come back in six months. But that, to me, is a really, really key metric. And obviously the closing statistics tell me which route has been more productive.
And since I deployed the sales machine, essentially like four weeks ago, two, three weeks ago, I'm now looking at this: which channel should I be doubling down on? Because I'm now in the process of bringing on a salesperson and I really wanna have these data points to say, go there, I found gold there, keep digging. So for now, for me, from go-to-market to a win, there are two or three numbers in between. And these are the ones I'm watching very closely for now. And then once I have the sales execution, I will obviously generate more numbers. I'll be like, okay, how much more are we making? What is the cost of the deal? All of that stuff. But for now, I really wanna see the machine work.

Paul: I think we covered a lot of ground in terms of all the things that you did. But I think the main problem, maybe, for the viewers or listeners is that this all sounds very good and kind of shiny, like everything worked out well. But knowing at least from personal experience, that's rarely how this goes, right? Typically there are a lot of corpses left and right on the way, the hard-learned lessons, right? So what would you say were the lowlights on this journey? What were the things that didn't work on the way here? Because it looks like you learned a lot of things, right? And me, on the other side of the journey, I'm going like, that sounds like you ran your head into a wall a lot of times, right? So maybe look at this a little bit from that side, because I think that's also interesting for the people that want to know how to do it. I think a lot of people talk too much just about the positive stuff, you know, and then it always sounds like this shiny, everything-went-easy, great journey, when in fact it's not, right? And maybe for those wondering, it's like, hey, I'm feeling like I'm failing all the time. I feel like I'm just, you know, stumbling forward, and this doesn't work, and this doesn't work. Like, how's this been for you?

Wael: Absolutely. I mean, what I put together now is a result of hitting the wall a couple of times, even when it comes to what I've just explained about what my sales machine looks like. We struggled in the beginning with, for example, our branding and our messaging. We had various times where we would speak to customers and they'd be like, but what is it you guys do? We struggled a little bit with that. I wouldn't say failure, it's more like learning, because we were explaining our product like techies, and then we realized very quickly that even though we could be talking to people that might seem like techies, they still really don't get it. So we actually almost started over. We had a rebranding: we brought in a branding agency, another startup, and they kind of interviewed us, and we spent a month with them. Then we came up with new messaging, and that immediately improved the outreach.

The go-to-market also failed miserably the first time. We were part of this acceleration program, and there was this kind of go-to-market agency, overseas, so cheap, like, oh yeah, we'll take your content and we'll send 10,000 emails. Not a single reply! And that made us feel so crap. It's like, what, 10,000 emails and not a single reply? The open rate was like, I don't know, 2%, and we were like, this is horrible, are we ever going to be successful? And then we realized, wow, the messaging, they're sending this crap message. We looked at what they were sending and it was like, what the hell is this? This is absolute garbage, you know, and there's a reason why it wasn't working. So we've learned a lot. In the beginning, I think, as a founder you kind of need to be in the details in a lot of places. Even if you bring in somebody to help you, don't rely 100% on them getting it right, even though you're paying them to do so. Unfortunately, you have to be hands-on. So we ran this thing for like three months and it cost us a bit of money, and we were like, this is absolutely terrible. So now, when we ran the automation again, we were super hands-on. We're working with somebody who's really good at this, somebody who's tried and tested. We asked about them, we looked at their stats. They showed us their own stats, which by comparison were a 60% open rate and a 40% reply rate, and we were like, okay, we did not do this properly before, and no wonder we didn't get it right.

So on the go-to-market and sales there was a lot of learning. This happened from August last year all the way to the end of the year, four months of pretty much crashing and burning with this, and we finally got it right at the beginning of this year.

One other thing, also when it comes to sales: we tried to go to the customer with the product, and sometimes this is good enough to convince them, but when they give that product to their users, they're not transferring the message you've just sold them. There was a customer, actually a very important customer that we really wanted to work with, and we built a product for them. We were talking to one of the managers there, but he wasn't the user. He took the demo and gave it to his people, and when we looked at the logs, it was horrible. They were not using it the way it was supposed to be used. Imagine you asking ChatGPT, hey, where did I put my keys yesterday? You know, stuff like this. So the feedback was not good. They were like, oh, they didn't like it, it didn't do what they wanted. We were like, what did they want? It's supposed to be an agent to solve legal problems, but when we looked at the logs they were asking it to, you know, find books, find files and archives or something, and none of that stuff was in there. We asked, what did you tell them about the product? He was like, well, I said it would do everything. I'm like, but that's not what we built it for, you know. So we were not involved in the part where the customer takes it to their own users and validates the product. We missed that. We got that wrong. We thought this was it, this was the happy destination. And unfortunately, this was a big mistake. So today we're extra careful about who we talk to. And this is potentially a customer we will talk to again, but I mean, this was a painful learning. The first version of our product was scalable, but didn't account for feature scalability. We had to do a refactoring to fix that problem, and that, again, was really painful. It cost us a few weeks. So yeah, there's no journey without falling into these issues across everything.
We obviously hit some walls.

Paul: I think it's good to hear, because there are so many people that just look at the results and go, oh, that's fantastic, you managed to get your first money in sales, and you're just thinking, okay, of course it cost an arm and a leg to get there. Very painful. I mean, I can tell you the good news: the next 10 are easier and the next hundred are almost automatic. I mean, there's some other fun stuff happening, but it feels more manageable, as chaos and just, you know, bad things happening feel a bit more normal to you. At least that's been my impression of this journey: you just get used to dealing with this. But I think it's about getting into this groove of, okay, it's supposed to suck, right? This stuff isn't supposed to work the first time around. If it works the first time around, you just got very lucky. And I would be almost paranoid about it nowadays, right? Like today, I'm like, this works too well. Why is this working so well, right? This isn't supposed to work right away! Today, when people see all of these nice shiny success stories and everyone's just leaving out all the parts that didn't work and all the parts that crashed and burned on the way, I think that doesn't really give you a realistic view of what you actually have to do, what the job to be done is for you if you want to get there. That's where I think it's a good view, you know, for people to see and be like, oh, this is supposed to fail all the time, right? Just to eventually succeed.

Wael: Absolutely. Look, this is not a life for somebody who's optimizing for happiness. I'll be completely honest: in the life of a founder, you're not optimizing for happiness. You're optimizing for learning. You're optimizing for growth. Growth is painful. You know, there will be some really cool moments in this, when you wake up and you get a really good email, somebody's using your product and having a good time. But the majority of the time it's not that. It's execution, painful execution. There will be problems, guaranteed problems and guaranteed failures. And that day when you wake up and you feel you don't want to wake up, you're just gonna have to wake up. You're just gonna have to say, okay, look, I really don't want to get out of bed today, but guess what? I'm just gonna do it, because this is what I need to do.

Paul: Yeah, I mean, for me, it's been basically finding new and exciting ways to get punched in the face. You know, as you grow, everyone from the outside goes, oh, it's fantastic, you're doing like 20 million this year, you're going to 60 million next year. And internally you're just thinking, it's all on fire. Everything's just going to explode any minute now. My job is to throw myself onto the next grenade, force myself out of bed, put out the next fire and hope that this whole place doesn't burn down the next day. And as I said, the cool thing, from your perspective, is that you're yet to find all the new and exciting ways things can break and explode in your face.

Wael: Can't wait!

Paul: I think it's been an interesting year. First of all, thank you for taking the time to share your experience and, you know, give others a bit of perspective on the journey. How does getting punched in the face feel from your perspective, right?

It's amazing! 10 out of 10, would do again! And yeah, I think that's been another episode of Been There, Done That. So thank you guys for tuning in and see you next time.

Daniel Dippold: Thank you for listening to Been There, Done That.

If this conversation resonated with you, there are three things you can do to support.

First, you can subscribe on whichever platform you're listening to this; there should be a subscribe button. It would mean the world if you subscribe.

Secondly, you can share this with someone you believe would benefit from the content of this conversation.

And thirdly, you can go to ewor.com/signals and stay up to date on all things EWOR by subscribing to our newsletter. Well thank you very much for listening today and see you at the next episode!
